From Wall Hangings to Working Principles: How AI Can Finally Make Purpose, Vision and Values Operational

By Barry Thomas • 10 June 2025 • 4 min read

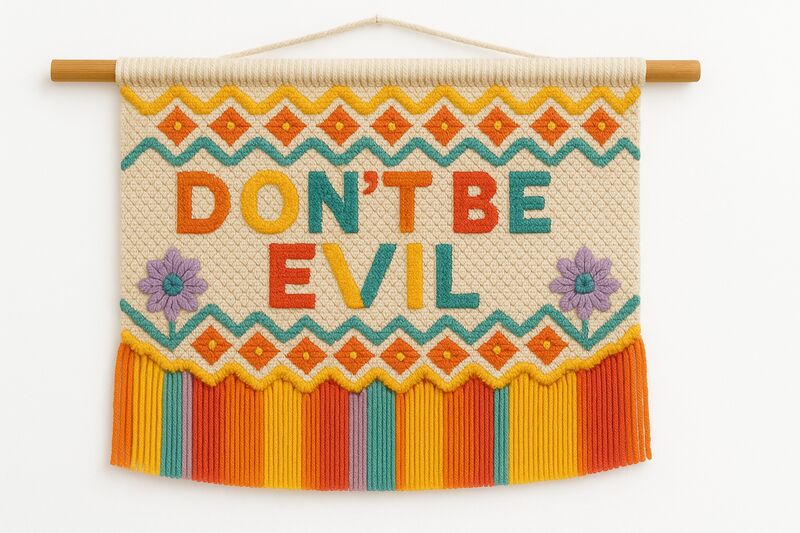

Every organisation has them. Purpose, vision, and values (PVV) statements—carefully crafted, beautifully framed, and largely ignored in daily operations. They adorn reception walls and appear in annual reports, but when was the last time one actually influenced a Tuesday afternoon decision about resource allocation?

This isn’t a failure of intent. Leaders genuinely want their organisations to embody their stated purpose and values. The gap exists because translating abstract principles into thousands of daily decisions requires a kind of omnipresent, context-aware guidance that has never been practically achievable.

Until, potentially, now.

The PVV Implementation Challenge

The fundamental problem with purpose, vision, and values statements is one of scale and specificity. A value like “we put customers first” is meaningful in the abstract but provides little guidance when a project manager faces a specific trade-off between delivery timeline and customer consultation. The distance between principle and practice is vast and filled with context-dependent judgment calls.

Traditional approaches to bridging this gap—training programs, culture initiatives, performance frameworks—all help but suffer from the same limitation: they can’t be present in every moment of decision-making. They’re episodic interventions in what needs to be a continuous process.

AI as a New Lens

What if AI could serve as a real-time interpreter between your organisation’s stated principles and its daily decisions? Not as an enforcer or monitor, but as a thinking partner that helps people connect their work to the organisation’s deeper purpose?

The technology for this is emerging rapidly. Large language models can now interpret nuanced context, keep complex instructions in view across a conversation, and reason about how general principles apply to specific situations.

Imagine an AI assistant that has deeply internalised your organisation’s PVV—not just the words, but the intent, the history, the trade-offs your leadership has navigated in defining them. This assistant could:

Help a procurement team evaluate vendors not just on price and capability, but on alignment with organisational values around sustainability or community impact.

Guide a product manager in framing feature decisions through the lens of the company’s stated purpose, surfacing considerations that might otherwise be overlooked.

Assist HR in developing interview questions that genuinely probe for values alignment rather than just technical competence.

Support leaders in crafting communications that authentically connect operational changes to strategic vision.

How It Would Work in Practice

The implementation would involve several layers:

Deep encoding of PVV context. This goes beyond feeding the AI your values statement. It means providing rich context about what each value means in practice—examples of decisions that exemplified the values, cases where values came into tension with each other and how leadership resolved those tensions, and the specific organisational history that shaped the current PVV.

Integration into workflow tools. The AI wouldn’t sit in a separate application that people need to remember to consult. It would be embedded in the tools people already use—project management platforms, communication tools, decision-support systems.

Continuous learning and refinement. As the AI encounters new situations and receives feedback on its guidance, its understanding of how the organisation’s PVV applies in practice would deepen. This creates a living, evolving interpretation of values rather than a static document.
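As a rough illustration of the first and third layers, the sketch below stores each value together with its practical context and assembles that context into text that could be handed to a language model. Everything in it is an assumption made for illustration: the names `ValueContext`, `build_guidance_context` and `record_exemplar` are hypothetical, the example decisions are invented, and no particular AI product or API is implied. "Learning" here simply means adding reviewed decisions to the stored examples, not retraining a model.

```python
from dataclasses import dataclass, field


@dataclass
class ValueContext:
    """One organisational value plus the practical context that gives it meaning."""
    name: str
    statement: str
    # Decisions that exemplified the value in practice.
    exemplar_decisions: list[str] = field(default_factory=list)
    # Pairs of (competing value, how leadership resolved the tension).
    resolved_tensions: list[tuple[str, str]] = field(default_factory=list)


def build_guidance_context(values: list[ValueContext], situation: str) -> str:
    """Assemble plain-text context that could be passed to a language model."""
    lines = ["Organisational values and how they have been applied:"]
    for value in values:
        lines.append(f"- {value.name}: {value.statement}")
        for example in value.exemplar_decisions:
            lines.append(f"  Example: {example}")
        for other, resolution in value.resolved_tensions:
            lines.append(f"  Tension with {other}: {resolution}")
    lines.append("")
    lines.append(f"Situation: {situation}")
    lines.append("Describe which values are relevant and what trade-offs they raise. "
                 "Do not make the decision.")
    return "\n".join(lines)


def record_exemplar(value: ValueContext, decision: str) -> None:
    """Add a human-reviewed decision as a new example for future guidance."""
    value.exemplar_decisions.append(decision)


# Hypothetical content, loosely based on the "customers first" example earlier.
customers_first = ValueContext(
    name="Customers first",
    statement="We put customers first.",
    exemplar_decisions=["(hypothetical) Delayed a release to finish customer consultation."],
    resolved_tensions=[("Delivery speed",
                        "(hypothetical) Consultation wins when existing customers are affected.")],
)

prompt = build_guidance_context(
    [customers_first],
    "Trade-off between delivery timeline and customer consultation.",
)
print(prompt)
```

Keeping the context as structured data rather than a single block of prose means individual examples can be reviewed, corrected or retired as the organisation's interpretation of its values evolves.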

Implementation Considerations

Several considerations need acknowledging:

Authenticity matters. If there’s a significant gap between an organisation’s stated values and its actual behaviour, an AI system that takes the stated values seriously will quickly highlight uncomfortable contradictions. This could be a feature rather than a bug, but only if leadership is prepared to address what surfaces.

Values aren’t algorithms. PVV inherently involve judgment, nuance, and sometimes productive tension between competing goods. The AI needs to be designed to surface these tensions rather than resolve them mechanically. It should enhance human judgment, not replace it.

Privacy and autonomy. There’s a meaningful difference between “a tool that helps me think about values” and “a system that monitors my values compliance.” The design needs to firmly occupy the former category.

Cultural sensitivity. Values interpretation varies across cultural contexts. What “respect” means in practice may differ significantly between teams, locations, and individuals. The system needs to accommodate this diversity rather than imposing a single interpretation.
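To make the "surface tensions rather than resolve them" principle concrete, here is a minimal sketch under stated assumptions. The `KNOWN_TENSIONS` registry, its entries and the `surface_tensions` function are all hypothetical. The point is the shape of the output: questions for a person to weigh, with no ranking, score or verdict, and nothing recorded about who asked.

```python
from itertools import combinations

# Hypothetical registry of value pairs that are known to pull against each other.
KNOWN_TENSIONS = {
    frozenset({"customers first", "delivery speed"}):
        "More consultation usually means a later delivery date.",
    frozenset({"sustainability", "cost discipline"}):
        "The lower-impact supplier may not be the cheapest.",
}


def surface_tensions(relevant_values: list[str]) -> list[str]:
    """Return prompts for human reflection; never a ranking or a verdict."""
    prompts = []
    # Check every pair of distinct values that apply to the decision.
    for first, second in combinations(sorted(set(relevant_values)), 2):
        note = KNOWN_TENSIONS.get(frozenset({first, second}))
        if note:
            prompts.append(
                f"'{first}' and '{second}' may conflict here: {note} "
                "How do you want to weigh them?"
            )
    return prompts


for question in surface_tensions(["delivery speed", "customers first"]):
    print(question)
```

Because the function only returns questions, the judgment stays with the person making the decision. A registry like this could also be maintained per team or location to reflect different interpretations of the same value.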

The Broader Opportunity

What makes this particularly interesting is that it addresses one of the oldest challenges in organisational management: the gap between strategy and execution, between what we say we stand for and how we actually operate.

For decades, this gap has been accepted as somewhat inevitable—a natural consequence of organisational complexity. AI doesn’t eliminate the gap, but it offers a fundamentally new mechanism for narrowing it: persistent, context-aware, individually tailored guidance that connects daily work to organisational purpose.

The organisations that explore this earliest won’t just have better-implemented values. They’ll develop a competitive advantage in coherence—the ability to act consistently with their stated purpose across thousands of daily decisions. In a world where stakeholders increasingly demand authenticity, that coherence may prove to be one of the most valuable capabilities an organisation can develop.

References

Shepherd Thomas regularly writes about the practical applications of AI in organisational strategy. For related reading, see our articles on document management as a foundation for AI success, and the silent killer of AI initiatives: unvoiced resistance.